For many years I’ve run with two different DNS configurations. The main lab in the co-lo used Windows DNS because I ran an Active Directory domain and getting Windows to integrate successfully with a Linux-based DNS was a pain. At home, I run BIND for local device resolution and general Internet forwarding. This DNS environment runs with DHCP on a dedicated paperback-sized Lenovo server.

Master/Slave

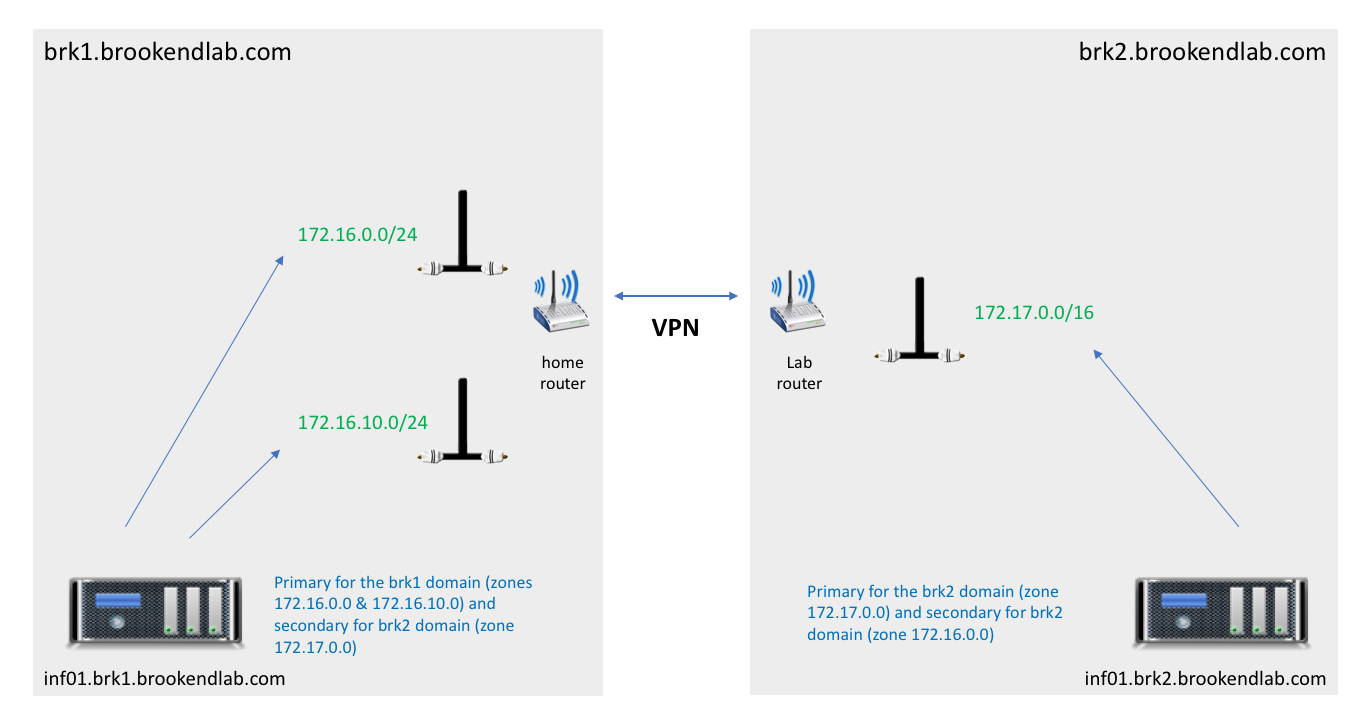

One thing I’ve been keen to set up is a master/slave configuration. The main lab servers are on the 172.17.0.0 network(s) and local devices are on either 172.16.0.0 or 172.16.10.0. A single DNS server could work, but name resolution at the other site would fail whenever the lab VPN link goes down. So, I’ve dumped the Windows AD domain and deployed local DNS in the lab using BIND. Each DNS server (one in each location) is now the master for its own location’s zones and a slave for the other location’s.

Within DNS, each location has a sub-domain – BRK1 for the devices at home and BRK2 for those in the main lab. Resolution is managed locally, with two zones at home – one for personal devices, one for lab work.
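
To make the master/slave pairing concrete, here is a minimal sketch of what the zone stanzas on the home (BRK1) server could look like. It is not my actual configuration: the parent domain example.com, the file names and the lab server address 172.17.0.53 are all placeholders, and only one of the two home zones is shown. The lab server would carry the mirror image, with BRK2 as the master zone and BRK1 as the slave.

```
// Sketch only: example.com, the file names and 172.17.0.53 are placeholders.

// Local zone: this server is authoritative and holds the editable zone file.
zone "brk1.example.com" {
    type master;
    file "brk1.example.com.zone";
    allow-transfer { 172.17.0.53; };   // let the other site's server pull this zone
};

// Remote zone: a read-only copy pulled from the other site's server.
zone "brk2.example.com" {
    type slave;
    masters { 172.17.0.53; };          // where to fetch the zone from
    file "slaves/brk2.example.com.zone";
};
```

Because each server keeps a transferred copy of the other site's zone, names in both sub-domains should still resolve locally if the VPN link drops, at least until the slave copy expires. Recent BIND releases also accept "primary" and "secondary" in place of "master" and "slave".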

Nomenclature

I agonised over whether to have two separate short names for the DNS servers in each location. In the end I decided to go with the same name and use FQDNs to reference either of them. In each domain, any short query finds the local name first anyway. I expect there are recognised standards for this, but I simply wanted names I could easily remember.

I’ve also removed site-specific names (previously device names included either BRK1 or BRK2), as these are superfluous now that the sub-domains identify the site.

Forwarding

Both DNS servers provide two services: they resolve internal device names and forward unknown DNS requests out for external resolution. The “forwarders” parameter sets onward resolution via 1.1.1.1 and/or 1.0.0.1, the public DNS service from Cloudflare. The “allow-transfer” setting authorises the DNS server at the other site to receive zone transfers for the local domain.
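
As an illustration of those two settings, an options block along the following lines would do the job. Treat it as a sketch under assumptions rather than a copy of my configuration: the address 172.17.0.53 is a placeholder for the server at the other site, and the "forward first" line simply spells out BIND's default behaviour.

```
// Sketch only: 172.17.0.53 is a placeholder for the other site's DNS server.
options {
    recursion yes;                      // answer queries for names we don't host

    // Send anything we can't answer locally to Cloudflare's public resolvers.
    forwarders { 1.1.1.1; 1.0.0.1; };
    forward first;                      // the default: try the forwarders, then
                                        // fall back to normal iterative resolution

    // Only the server at the other site may request zone transfers.
    allow-transfer { 172.17.0.53; };
};
```

Setting "forward only" instead would stop the server falling back to its own resolution if the forwarders are unreachable. An allow-transfer inside an individual zone statement overrides the one in the options block for that zone.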

Zones

DNS entries for each segment of the network are stored in zone files, which in BIND are typically in /etc/named. Each zone has a forward and a reverse lookup file. There are also other files relating to DNS entries added dynamically through the nsupdate command. With nsupdate, new entries are first written to local journal files and only later merged into the main zone files. I’ll cover these in a separate post as I show how I’m using temporary DNS names for virtual instances.
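
For nsupdate to be accepted at all, a zone has to permit dynamic updates. One common way of doing that is with a shared TSIG key, sketched below. This is an assumption for illustration and not necessarily how my zones are set up: the key name ddns-key, the zone name, the file path and the secret are all hypothetical placeholders.

```
// Sketch only: key name, zone name, file path and secret are placeholders.
key "ddns-key" {
    algorithm hmac-sha256;
    secret "REPLACE_WITH_GENERATED_SECRET";
};

zone "lab.brk1.example.com" {
    type master;
    file "dynamic/lab.brk1.example.com.zone";   // named must be able to write here
    allow-update { key "ddns-key"; };           // only holders of the key may update
};
```

The journal for a dynamic zone is kept alongside the zone file, using the same name with a .jnl suffix, which is why the directory needs to be writable by the named process.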

rndc

One final comment – the rndc command provides the ability to do zone refreshes across sites. It’s also needed to “freeze” and “thaw” zones that use dynamic updates; without a freeze, manual edits to the zone files can fall out of step with the journal and cause problems.

The Architect’s View

DNS needs to be accurate to ensure that certain software platforms (most notably Kubernetes) will work correctly. I’ve had some problems with k3s (more in another post) that pushed me to get DNS correctly sorted.

I should of course remind readers that this is a lab environment, things are in a constant state of flux and there will be mistakes in the configurations (which you can find on GitHub). That’s all part of the fun of a lab environment!

Copyright (c) 2007-2020 Brookend Limited. No reproduction without permission in part or whole. Post #f31a.