VMworld, VMware’s flagship user conference, was held this week in Las Vegas, USA. One of the main announcements was the eagerly awaited VMware Cloud on AWS (VMC) moving to general availability. VMC provides the customer experience of VMware software running on AWS hardware in the public cloud. It potentially removes the need to run on-premises hardware at all, although it’s unlikely this is the intended customer target. Instead, VMware is looking to stay relevant in the move to public cloud by offering a hybrid solution. So what’s available, what do we know so far, and is this a game changer for VMware and the industry?

The Offering

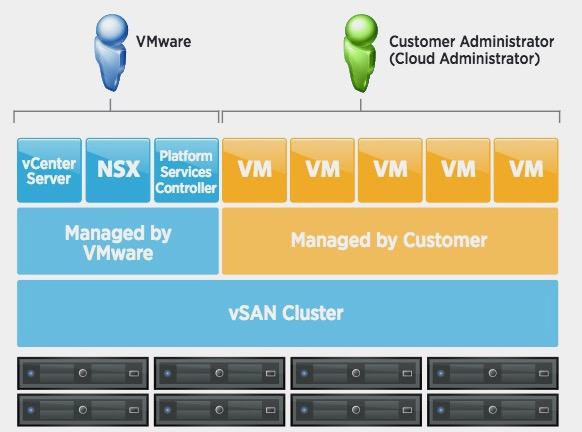

VMware Cloud on AWS, or VMC, is an AWS-hosted implementation of VMware’s Cloud Foundation software. Cloud Foundation is a package of vSphere, NSX and Virtual SAN with a new interface called SDDC Manager. The idea of Cloud Foundation is to provide a single platform that covers both on- and off-premises deployments with a single look and feel. Cloud Foundation is being offered through both IBM and AWS, although the AWS implementation has gained the most attention. VMC is now available through VMware, running on AWS hardware (more on that in a moment), initially on a per-hour charge with one- and three-year discounted pricing due soon. The service is currently offered only through the AWS US West 2 (Oregon) data centre; however, there are plans to expand to other locations.

Hardware Specifications

Although a public cloud offering, VMC is very hardware specific. Servers are dedicated to each customer, with a single specification (per server):

- Dual 18-core Xeon E5-2686 v4 processors (14nm Broadwell architecture) @ 2.3GHz

- 512GB DRAM

- 10TB raw storage for Virtual SAN (two disk groups of four NVMe SSDs each, eight drives in total; each group has a 1.7TB cache device and 5.1TB of capacity)

Storage

A minimum of four hosts is required for vSAN resiliency and to provide redundancy in the case of a single server failure. vSAN is configured as RAID-1 but can use RAID-5 or RAID-6 (with 6 hosts). The idea of dedicating hardware seems to be at odds with the concept of public cloud. However, bearing in mind the size of these hosts, it’s difficult to see how they could be anything but dedicated: these configurations are bigger than the largest AWS M4 instance and are probably a standard deployment unit for AWS. It will be interesting to see how VMware/AWS introduce other physical configurations, including what’s practical and cost effective from a scaling perspective. I’m sure AWS would prefer highly configured servers in their data centre rather than lots of lower-powered ones, and I wonder whether nesting/virtualisation has been considered (nesting isn’t currently supported on VMC).
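To put the RAID options in context, here is a rough sketch of how raw capacity might translate into usable capacity across a cluster. The per-host capacity comes from the specification above. The overhead multipliers (2x for RAID-1, 1.33x for RAID-5, 1.5x for RAID-6) are the figures commonly quoted for vSAN and are an assumption here, as is my reading that six hosts are needed for RAID-6 only. Slack space, deduplication and compression are ignored, so treat the results as an upper bound.

```python
# Per-host figures taken from the hardware specification above.
DISK_GROUPS_PER_HOST = 2
CAPACITY_PER_GROUP_TB = 5.1  # capacity tier only; cache devices excluded

# Assumed raw-to-usable overhead multipliers for each vSAN policy.
OVERHEAD = {"RAID-1": 2.0, "RAID-5": 4 / 3, "RAID-6": 1.5}

# Assumed minimum host counts: four is the VMC cluster minimum,
# six is read from the "(with 6 hosts)" note for RAID-6.
MIN_HOSTS = {"RAID-1": 4, "RAID-5": 4, "RAID-6": 6}


def usable_tb(hosts: int, policy: str) -> float:
    """Estimate usable capacity in TB for a cluster under a given policy."""
    if policy not in OVERHEAD:
        raise ValueError(f"unknown policy: {policy}")
    if hosts < MIN_HOSTS[policy]:
        raise ValueError(f"{policy} needs at least {MIN_HOSTS[policy]} hosts")
    raw_tb = hosts * DISK_GROUPS_PER_HOST * CAPACITY_PER_GROUP_TB
    return raw_tb / OVERHEAD[policy]


if __name__ == "__main__":
    for hosts, policy in [(4, "RAID-1"), (4, "RAID-5"), (6, "RAID-6")]:
        print(f"{hosts} hosts, {policy}: {usable_tb(hosts, policy):.1f} TB usable")
```

On these assumptions, a minimum four-host cluster has roughly 40TB raw and about half of that usable under the default RAID-1 policy.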

Networking

Networking is provided through NSX and appears to be indirectly isolated from AWS networking, or “decoupled” as the documentation puts it. Connectivity is established with layer 3 VPNs, one for management and one for VM data.

Deployment

The actual deployment of a cluster (assuming you have an account) looks quite straightforward and is achieved online using a VMware portal and AWS CloudFormation. Customers simply select the number of hosts required and some initial networking settings, and the environment is built automatically, albeit with a wait of around two hours. This timescale seems a little long, bearing in mind an ESXi host and tools can be built and deployed easily in a much shorter time. However, it may be that VMware wants to manage expectations and so is setting a more realistic timescale.

Support

VMware is the main contact point for VMC, managing and supporting deployments. This means potential customers can’t simply log into AWS and order a VMC cluster using a credit card. I expect this stance has been taken to minimise the cost of implementing the service. It’s easy to manage the churn of virtual instances that can be hosted on one of many physical servers, but when the deployment is an actual server, the downtime of any particular piece of hardware could be considerable as customers try out the service before fully committing. VMware and AWS would have to seed a datacentre with way more hardware than they might want, simply to cope with the turnover of short-lived VMC setups, making the chargeable hours for each server lower than desirable. We’ll discuss support from VMware in a moment.

Pricing

Probably the most keenly awaited piece of news will have been that of pricing. VMware are quoting a rather specific $8.3681 per hour per host on-demand charge. That’s $6,109 per month, assuming 730 hours in the month, or $24,436 per four-host cluster. Over the course of a year, the cost is a shade under $300,000 for a 4-node VMC. Although not available yet, 1-year and 3-year reserved pricing is planned, offering a 30% and 50% discount respectively.
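For anyone wanting to check the arithmetic or try other cluster sizes, the sums above can be sketched as follows. The hourly rate, the 730-hour month and the discount percentages are the quoted figures; applying the reserved discount as a straight reduction on the hourly rate is my assumption, since the reserved pricing structure hasn't been published. Note that the $24,436 figure is four times the rounded per-host number; unrounded, it comes out about a dollar lower.

```python
HOURLY_RATE_USD = 8.3681   # quoted on-demand rate per host
HOURS_PER_MONTH = 730      # as assumed in the text
HOURS_PER_YEAR = 8760      # 365 days * 24 hours


def cluster_cost(hosts: int = 4, discount: float = 0.0) -> dict:
    """Return monthly and yearly costs in USD for a cluster.

    `discount` is a fraction (0.30 for 30%). Treating it as a simple
    reduction of the hourly rate is an assumption for illustration.
    """
    hourly = HOURLY_RATE_USD * (1 - discount)
    return {
        "per_host_month": hourly * HOURS_PER_MONTH,
        "cluster_month": hourly * HOURS_PER_MONTH * hosts,
        "cluster_year": hourly * HOURS_PER_YEAR * hosts,
    }


if __name__ == "__main__":
    for label, discount in [("on-demand", 0.0), ("1-year", 0.30), ("3-year", 0.50)]:
        cost = cluster_cost(hosts=4, discount=discount)
        print(f"{label:>9}: ${cost['cluster_year']:,.0f} per year for 4 hosts")
```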

I wonder if potential customers will be surprised at the cost of VMC, compared to an on-premises solution. Remember that this charge is from VMware only and doesn’t include any AWS networking charges (such as bandwidth or IP addresses). VMware quotes some TCO comparisons (which are not externally validated), putting the price of VMC on a par with native cloud instances and some 40% cheaper than traditional on-prem. I’d question this, because headcount won’t reduce that much (or at all, if on-prem still exists) and so is a sunk cost. The only real saving here is in capex on new hardware.

However, compare a single VMC node to an AWS M4 instance and the cost is not unreasonable. The largest M4 instance available is $3.20/hour and probably half a VMC server. So at that rate, the margin for VMware and AWS is around $2/hour, which also has to cover software licences. This is before any discounts, so I can see VMware offering some movement in price as customers choose between renewing their hardware or moving to the cloud. There’s a natural tendency to think leased charges are less value for money than purchasing hardware; however, servers have little or no residual value after 3 years. The only possible benefit is in sweating an asset longer than the expected amortisation period, although further maintenance costs are usually incurred anyway.
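The margin estimate is simple subtraction, but it is sensitive to how many M4 instances you think one VMC host is worth. A quick sketch, where the two-instances-per-host ratio is my guess from above rather than a published figure:

```python
VMC_HOST_HOURLY_USD = 8.3681   # quoted VMC on-demand rate per host
M4_LARGEST_HOURLY_USD = 3.20   # largest M4 instance rate quoted above


def implied_margin(m4_per_host: float = 2.0) -> float:
    """Hourly amount left over after the equivalent M4 spend.

    `m4_per_host` is an estimate of how many of the largest M4
    instances equal one VMC host; 2.0 reflects the "probably half
    a VMC server" guess and is not a published ratio.
    """
    return VMC_HOST_HOURLY_USD - M4_LARGEST_HOURLY_USD * m4_per_host


if __name__ == "__main__":
    for ratio in (1.5, 2.0, 2.5):
        print(f"{ratio} x M4 per host -> ${implied_margin(ratio):.2f}/hour left over")
```

At a ratio of two the leftover is just under $2/hour; if a host is worth more than about 2.6 of the largest M4 instances, the leftover goes negative.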

Support (Again)

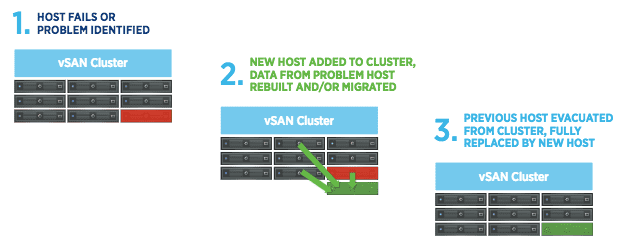

As mentioned, VMware themselves are the main point of contact for VMC, including fault management and resolution. This also means that configuration of certain components (like NSX and the vCenter Server configuration) is not available to the end user. In the event of hardware or server failure, VMware automatically places servers into maintenance mode and/or takes nodes out of service. Some of the documentation indicates that servers are automatically rebooted where necessary. Both vSphere DRS and HA are enabled by default; however, from what I’ve read, Fault Tolerance isn’t. Note also that a VMC cluster currently exists in a single data centre and availability zone, although spanning clusters across availability zones is a future enhancement.

For many potential customers, I think the handoff between customer and VMware support will be a challenge and require some thinking. Theoretically, VMware will schedule node upgrades where required (moving virtual machines with vMotion to accommodate this) and proactively add and remove nodes from a cluster to fix hardware errors. Where does this boundary start and end? For example, if an SSD has transient errors, or a single NIC fails on a host, will the downtime simply happen on that node/device or be scheduled with the customer?

Data Mobility

Customers can move virtual machines to VMC in one of two ways. The first is through the VMware Content Library, accessing ISOs, OVAs and scripts from an on-premises library to build new images. Alternatively, existing virtual machines can be cold-migrated (which presumably means migrated while powered down). Cold migration is enabled through Hybrid Linked Mode, which also provides a single management interface that amalgamates configuration data from on- and off-premises deployments. Cross-cloud vMotion is offered as a future enhancement.

It’s not at all clear to me how a VMC cluster can access existing AWS features, although it is implied that it can be done. It looks like VMC<->AWS will operate no differently than an on-premises vSphere deployment with AWS, except with much lower latency. I don’t see any details of application or data integration between service offerings. This presumably means customers will still have to do their own data management between platforms, including all of the overhead that implies.

The Architect’s View™

It would be fairer to describe VMC as more of a managed service offering than true public cloud, as there is such a focus on the hardware. VMC 1.0 (if we can describe it like that) doesn’t seem to offer much in terms of AWS integration. I don’t see anything in the AWS documentation that even references VMware. However, maybe that will be more apparent once the service is up and running. Without giving VMC a whirl, that one remains an open question. I don’t suppose VMware wants to make it easy for customers to jump ship. Over time, however, and as customers mature in their consumption of cloud, I think there will be a desire to have more data interoperability.

To be fair, this is early days for VMware in this instantiation of public cloud. Data mobility still needs to be addressed, because until VMs and their data can easily be moved on/off prem and into the public cloud, VMC is simply vSphere on a server in another data centre. The real future value for customers is interoperability, mixing and matching services and data on demand, but VMC is a good start.

Further Reading

- VMware Cloud on AWS (VMware website, retrieved 31 August 2017)

- VMware Cloud Foundation (VMware website, retrieved 31 August 2017)

- VMC Technical Overview (PDF, VMware website, retrieved 31 August 2017)

Images used in this post are copyright (c) VMware.

Copyright (c) 2007-2021 – Post #805D – Brookend Ltd, first published on https://www.architecting.it/blog, do not reproduce without permission.