Remember the early days of AWS and S3? Price reductions used to be announced every year, sometimes twice a year, as the cloud service providers fought for market share. After 13 years of object storage in the Amazon cloud, are we still seeing price reductions? More importantly, are the price reductions in the hardware itself being passed on to customers?

Price Trends

Over the past 50-60 years of the IT industry, it seems reasonable to say that the price of technology has declined year on year. This isn’t necessarily true in absolute terms, but per “unit” of compute, price drops have been huge and unlike those in any other market.

Part of that trend has been the insatiable desire to achieve the “next big thing” – faster processors; larger capacity hard drives; faster networking; faster DRAM. This escalating race means that, for example, the cost per GB of persistent storage has declined perhaps 30% year on year for the last 20 years (we will look at some real data in a moment).
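To get a feel for what a decline of that size means when it compounds, here is a minimal sketch. It takes the 30% and 20-year figures from the paragraph above at face value (they are a rough estimate, not measured data), and the function name is purely illustrative.

```python
def remaining_fraction(annual_decline: float, years: int) -> float:
    """Fraction of the starting price left after a yearly decline compounds."""
    return (1.0 - annual_decline) ** years


# Assumed inputs: the rough 30% per year over 20 years quoted in the text.
fraction = remaining_fraction(0.30, 20)

print(f"Price after 20 years: {fraction:.4%} of the starting price")
print(f"Equivalent reduction: about {1 / fraction:,.0f}x cheaper")
```

Even if the true rate is somewhat lower than 30%, compounding over two decades still yields a reduction of several orders of magnitude, which is why relatively small changes in the annual rate matter so much over the long term.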

Price reductions are both a curse and a blessing. On the one hand, every year you’re guaranteed to get more for your money. In storage, the increasing capacity of drives means raw $/GB costs are incredibly low today. To be fair, we’re countering that by storing more data, but at the lowest level, prices are lower than ever and on a continuous trend towards zero cost[1].

However, because prices drop so quickly, the overall TCO of a platform makes it cost-effective to replace over a relatively short period. As a result, technology gets refreshed perhaps every 3-5 years, even if it’s not necessary to change[2]. As a side note, “forklift upgrades” are becoming a thing of the past as vendors implement techniques to refresh hardware in place or make migrations pretty much transparent. This capability will be highly valued in the future.

Cloud Obfuscation

Let’s think about the cost of using technology in the public cloud. CSPs will have benefited both from price reductions over time and from repurposing technology as newer services are introduced. Because the specific implementations are obfuscated from the customer, the CSP can sweat their assets way past any 3 to 5-year refresh cycle.

Now, you could say that on-premises customers could do the same. However, the typical enterprise hasn’t gone through the hyper-growth curve that AWS, Azure and Google have been experiencing over the last decade. When you’re growing massively, it makes sense to focus on new deployments and refresh existing technology only when necessary.

Price Reductions

From 2006, when Amazon Web Services introduced the S3 object storage platform, we’ve seen consistent price reductions in the end-user $/GB cost. That is, until four years ago. Since then, the base pricing has remained constant. Instead, AWS has chosen to introduce new features with price breaks for a lower quality of service.

Changing the pricing dynamic by introducing new services (and in some cases discontinuing some) makes it difficult to plot a trend in price reductions. However, base price reductions for standard S3 capacity can be pieced together from blogs and announcements. Some of this data[3] is shown in the table below. These figures refer to US East (Ohio).

| Date | S3 Standard Storage (price per GB per month) |
|---|---|
| 26/07/2019 | $0.023 |
| 16/09/2015 | $0.023 |
| 26/03/2014 | $0.030 |
| 29/11/2012 | $0.095 |
| 06/02/2012 | $0.125 |
| 01/11/2010 | $0.140 |
| 01/11/2008 | $0.150 |

The cost per GB (in this case of the lowest tier) of storage has decreased dramatically over time. But the price of this lowest tier has stayed the same for the last 4 years. So, is AWS passing on the continuous erosion in HDD prices that we expect to see?
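As a rough way to put a number on that trend, the sketch below computes the compound annual rate of decline between any two rows of the table. The prices and dates are copied from the table; the function name and the 365.25-day year are my own assumptions, and the result is only as good as the handful of data points available.

```python
from datetime import date

# (date, $/GB/month) pairs copied from the S3 Standard table above.
S3_STANDARD_PRICES = [
    (date(2008, 11, 1), 0.150),
    (date(2010, 11, 1), 0.140),
    (date(2012, 2, 6), 0.125),
    (date(2012, 11, 29), 0.095),
    (date(2014, 3, 26), 0.030),
    (date(2015, 9, 16), 0.023),
    (date(2019, 7, 26), 0.023),
]


def annualised_decline(start, end):
    """Compound annual rate of price decline between two (date, price) points."""
    (start_date, start_price), (end_date, end_price) = start, end
    years = (end_date - start_date).days / 365.25  # assumed average year length
    return 1.0 - (end_price / start_price) ** (1.0 / years)


overall = annualised_decline(S3_STANDARD_PRICES[0], S3_STANDARD_PRICES[-1])
recent = annualised_decline(S3_STANDARD_PRICES[-2], S3_STANDARD_PRICES[-1])

print(f"2008 to 2019: {overall:.1%} per year")
print(f"2015 to 2019: {recent:.1%} per year")
```

Comparing the whole-period figure with the figure for the last two rows makes the flattening visible: the long-run average hides the fact that the most recent period shows no reduction at all.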

Comparison Data

Getting data on HDD prices isn’t straightforward. We have a range of different models and types, from enterprise to consumer drives, performance to capacity. Fortunately, there are a few sources of data available that seem to corroborate each other.

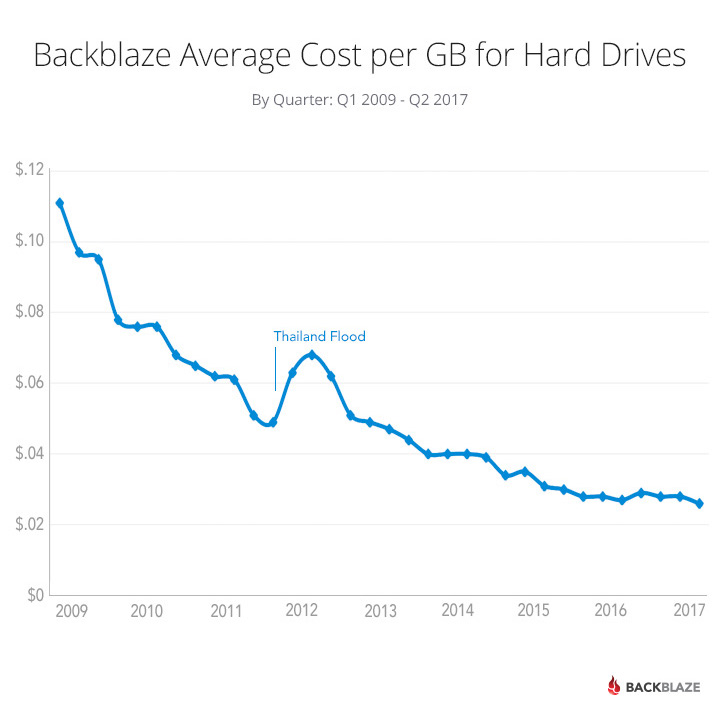

Backblaze has a great blog post from 2017 that shows price erosion and the average cost per GB of HDDs in the Backblaze platform from 2009 to 2017. This is a good comparison source to AWS, as the Backblaze offering (B2) is essentially performing the same job as S3 and will have had multiple tiers of storage over time. The data is shown in figure 2 – please check out the blog for more details.

The second set of data is more comprehensive and looks at drive prices since the hard drive was first introduced (source here). Like the Backblaze data, this graph shows that prices have dropped consistently towards $0.02/GB, except for the blip caused by the Thailand floods in 2011. However, price reductions have slowed as HDD vendors have struggled to increase drive capacities at rates we’ve previously experienced.

Capital vs Operational

What surprised me with this data was the alignment in price between the capital cost of hard drives in $/GB and the monthly charge for S3 ($/GB/month). The two sets of data are very similar. In the early days of S3, AWS didn’t initially (or so it appears) return cost savings to customers to match the decline in HDD prices. The difference, of course, could be the lack of accurate data.

What we can see is that over the course of the decade, AWS has returned similar price reductions to the market, albeit one being capital and one being an operational cost.

Extra Costs

This alignment of costs and pricing raises the question as to how end-users should make decisions on using either on-premises or public cloud storage. AWS needs to cover many additional components in their overall S3 TCO. This will include data centre, networking, power/cooling and staff costs to name but a few. Don’t forget that AWS also charges for data access through egress and I/O operations, so to the end-user, the usage profile of data will impact the consumption costs.

BYOS (Build Your Own S3)

If the monthly S3 charge per GB is roughly equal to the one-off capital cost per GB of a hard drive, then three years of S3 storage costs around 36 times the raw drive price. With a 36:1 multiple (assuming a 3-year TCO), does it make sense to build your own object store? Several businesses have famously moved back from AWS after judging it cheaper to run on-premises. However, you have to know your own operational processes pretty well and have a good model of costs over time. Enterprises have been doing this for years with internal service catalogues. So, the cost/benefit should already be pretty easy to identify.
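The arithmetic behind that multiple can be sketched in a few lines. This is only an illustration: it assumes the S3 price from the table ($0.023/GB/month), an approximate drive cost of $0.02/GB taken from the HDD trend described earlier, and a hypothetical helper name. It ignores everything else in the TCO (data centre, power, staff, redundancy, egress).

```python
def rental_to_capital_multiple(monthly_per_gb: float,
                               capital_per_gb: float,
                               months: int = 36) -> float:
    """Total rental cost over `months`, as a multiple of the one-off capital cost."""
    return (monthly_per_gb * months) / capital_per_gb


# Parity case: monthly charge equals capital cost per GB, over a 3-year TCO.
parity = rental_to_capital_multiple(0.02, 0.02)

# Assumed figures: S3 Standard at $0.023/GB/month vs. roughly $0.02/GB for raw HDD.
with_table_price = rental_to_capital_multiple(0.023, 0.02)

print(f"Parity multiple over 36 months: {parity:.1f}:1")
print(f"Using the table's S3 price:     {with_table_price:.1f}:1")
```

Changing `months` to match your own refresh cycle, or `capital_per_gb` to reflect the drives you would actually buy, shows how sensitive the multiple is to those two inputs.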

The Architect’s View

The conclusions in this post are really only indicative rather than offering a basis to make strategic or architectural decisions. The maths used is lightweight. We haven’t, for example, looked at the declining cost over time versus data growth, which would alter the calculations somewhat. We also didn’t include any purchasing power that AWS undoubtedly has. AWS costs for raw components could be 20, 30 or even 40% lower than we’re showing. The aim here is really to show if any trends exist. What we can see is that (at least until recently), AWS was passing savings back to the customer.

With 13 years of data behind them, AWS knows how to price up a service offering that relies on capital expenditure. As we see traditional vendors looking to translate their existing infrastructure businesses into service offerings, there’s a big learning curve ahead for vendors who, until now, have made money on capital purchases. AWS and Azure have both started to move on-premises with hybrid solutions and will have a good idea of what their costs will be in this scenario.

I do wonder how aggressive the infrastructure providers will (or even can) be, compared to the CSPs. On-premises as a service is going to be an interesting battleground to watch during the next 18-24 months.

[1] Note, like the hare and the tortoise in a race, there is a difference between trend and absolutes – the trend is towards zero, even if we never reach it, because drive capacities will continue to increase.

[2] Note, other factors occur here – refresh can be done because of other metrics like space constraints or technology obsolescence.

[3] There have been dozens of price reductions with S3, but AWS quickly remove this data from their blogs, so finding complete data isn’t easy.

Post #2cc3. Copyright (c) Brookend Ltd, no reproduction without permission in whole or part.