Kubernetes is hard, by which we mean challenging to install and manage. So how can we operate a container-based infrastructure in smaller environments or at the edge? One option is k3s from Rancher Labs.

Minima

Kubernetes, or k8s as it is frequently abbreviated, is an enterprise-class container management framework. At a minimum, a Kubernetes installation requires a master (management) node and a worker node that runs applications. However, if you’re building out a production environment, a minimum of three nodes is desirable in order to survive the failure of a master.

Building out a three-node cluster isn’t that hard, but this represents a minimum configuration. Actual production clusters will scale much higher, depending on the number of applications supported.

In a core data centre or the public cloud, building out multiple nodes isn’t a problem. However, as we look at Edge and IoT requirements, multi-node configurations are wasteful and overly complicated. This makes the trade-off of using a containerised set of applications with Edge/IoT more debatable unless there is another way forward.

K3S

Step in k3s, a much lighter Kubernetes deployment that can be installed on a single node which acts as both master and worker. K3s also supports ARM64 and ARMv7 architectures and so will easily run on a Raspberry Pi or similar device.

I thought it would be fun to take k3s for a spin, so I initially built out a deployment on virtual machines that sit on my Scale Computing NUC cluster. The experience was less than optimal, and I spent a lot of time troubleshooting to work out why I couldn’t fire up a master and multiple worker nodes all talking to each other.

My issues in getting k3s working initially were all DNS-related, and they were part of what prompted me to sort the lab environment out. So, if you’re going to try k3s on your infrastructure, make sure DNS is working first.
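One way to check DNS before installing is a small pre-flight script run on each machine. The following is a minimal sketch, not part of k3s itself; the hostnames in the usage example are hypothetical placeholders for your own master and worker names.

```python
import socket


def resolve_all(hostnames):
    """Map each hostname to a sorted list of resolved addresses.

    A hostname that fails to resolve maps to an empty list.
    """
    results = {}
    for name in hostnames:
        try:
            infos = socket.getaddrinfo(name, None)
            # Each entry is (family, type, proto, canonname, sockaddr);
            # sockaddr[0] is the address string for both IPv4 and IPv6.
            results[name] = sorted({info[4][0] for info in infos})
        except socket.gaierror:
            results[name] = []
    return results


def unresolved(hostnames):
    """Return only the hostnames that could not be resolved."""
    return [name for name, addrs in resolve_all(hostnames).items() if not addrs]


if __name__ == "__main__":
    # Hypothetical node names; substitute the names used in your lab.
    nodes = ["k3s-master.lab.local", "k3s-worker1.lab.local"]
    missing = unresolved(nodes)
    if missing:
        print("DNS lookup failed for:", ", ".join(missing))
    else:
        print("All node names resolve.")
```

Running this on every node, not just the master, is the useful part: each machine needs to resolve the others for the cluster to form.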

Second Attempt

My second attempt at installation was much more successful. As figure 1 shows, deployment is as simple as pulling an installation script that downloads and installs the binaries. Within a couple of minutes, you can have a working environment on an x86 server, VM or ARM device. Deploying onto a worker node requires the master’s node token (a key hash generated on the master), which gets passed to the installation script. Figure 2 shows the result of building out k3s on a mix of Raspberry Pi devices with 12 workers and one master.
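To make the two installation steps concrete, here is a minimal sketch that only builds the shell commands as strings; it does not run them. The command shapes follow the k3s quick-start documentation as I understand it (the `get.k3s.io` script, with `K3S_URL` and `K3S_TOKEN` set for a worker), so check the current documentation before relying on them. The hostname and token in the usage example are hypothetical; the real token is typically read from `/var/lib/rancher/k3s/server/node-token` on the master.

```python
import shlex

# Installation script location from the k3s quick-start guide.
INSTALL_URL = "https://get.k3s.io"


def server_install_command():
    """Command to install k3s as a master (server) on the local machine."""
    return f"curl -sfL {INSTALL_URL} | sh -"


def agent_install_command(server_host, token, port=6443):
    """Command to install k3s as a worker (agent) that joins an existing master.

    server_host and token are supplied by the caller; both are quoted so
    that unusual characters cannot break the shell command.
    """
    url = f"https://{server_host}:{port}"
    return (
        f"curl -sfL {INSTALL_URL} | "
        f"K3S_URL={shlex.quote(url)} K3S_TOKEN={shlex.quote(token)} sh -"
    )


if __name__ == "__main__":
    print(server_install_command())
    # Hypothetical master name and token, for illustration only.
    print(agent_install_command("k3s-master.lab.local", "example-node-token"))
```

Generating the commands rather than executing them keeps the sketch safe to run anywhere; the output can be pasted into an SSH session on each device.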

Rancher

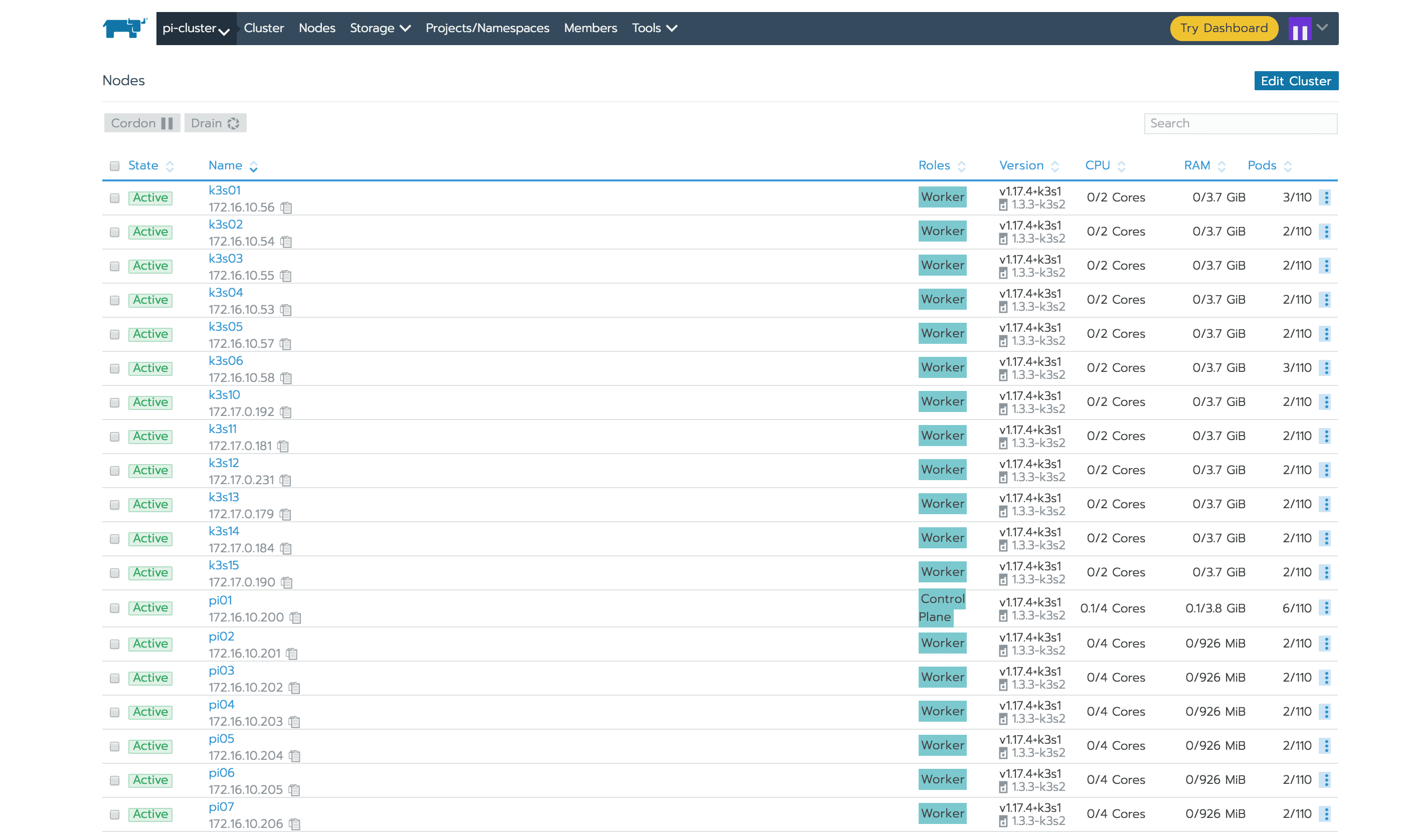

At this point, it seemed like a good idea to try out installing the Rancher Kubernetes cluster management software. As this runs on Kubernetes or Docker, I decided to run Docker Community Edition on one of the x86 nodes and install Rancher onto that. Figure 3 shows an expanded cluster, with nodes across both local and remote data centres, all configured to a single master. This picture is the view from within the Rancher GUI.

PoC

As a Proof of Concept, k3s works efficiently to provide a quick and straightforward Kubernetes infrastructure. Once DNS was sorted, the second installation attempt worked flawlessly. More importantly, the installation was easy enough to execute remotely. This makes it trivial to, for example, ship an IoT device and have k3s running in minutes. Of course, this is a simple PoC, and more detailed testing would be needed to see how this plays out in a more complex environment. It’s worth noting, for example, that the installation doesn’t support a second master, so the loss of the master node is pretty catastrophic. However, that’s not a problem for the use cases k3s is aimed at.

The Architect’s View

K3s is a simple Kubernetes deployment that could be used for IoT, Edge or training purposes. The ability to run on a single $35 Raspberry Pi (or even a VM running on a laptop) means anyone can get started learning Kubernetes technology. This has to be a good thing. I’m looking forward to experimenting more and seeing what else I can run across this infrastructure.

Copyright (c) 2007-2020 Brookend Limited. No reproduction without permission in part or whole. Post #3ed8.