Businesses thrive on data, and increasingly that data is being generated and used at locations outside of the core data centre. Edge and remote office locations need fast and reliable computing, including storage. How do we design and manage modern edge systems to meet the demands of the modern enterprise? In this post, we look at how the IBM FlashSystem 5200 offers another way to develop an edge computing platform.

- IBM FlashSystem 5200 Deep Dive

- IBM FlashSystem Review – Part 1 – Hardware

- IBM FlashSystem Review – Part 2 – Software

- IBM FlashSystem Review – Part 3 – Ease of Use

Background

Arguably, the “edge” is not a new concept. Businesses have always taken advantage of remote data collection and processing, whether through sensors, specific data collection processes or operating branch offices. However, the change experienced in recent years has been one of speed and time to market. Competitive advantage is driven by the ability to respond to customer demands in near real-time, pushing technology ever harder to deliver results. Modern edge solutions need to be more than data collectors by also processing and filtering data before any centralisation occurs.

The ability to support edge requirements is a balancing act comprising multiple variables.

- Cost – solutions must be cost-efficient, as deployments could run into hundreds or thousands of locations. Every percentage point shaved off the price of hardware and software translates into significant savings for a highly distributed business.

- Availability – systems need to be highly available, firstly to meet the needs of the users, but also to reduce the time and cost of management. Every site visit to fix failed equipment represents a higher support cost overall.

- Manageability – this covers several aspects. Systems should be remotely manageable, support in-place upgrades, and offer automated, centralised monitoring.

- Functionality – solutions must be able to deliver the features needed by the unique edge demands while at the same time providing multiple flexible options and configurations that operate in a standardised way. This includes being able to scale without completely rebuilding the solution.

It may come as a surprise to learn that most edge technologies are simply repackaged data centre hardware, incorporating networking, servers, and storage. Solutions can be “ruggedised” for deployment in locations that don’t offer the traditional stability seen in core data centres. This deployment choice can be helpful, as edge and core systems can be aligned if the vendor offers scalability across its offerings.

Edge Design

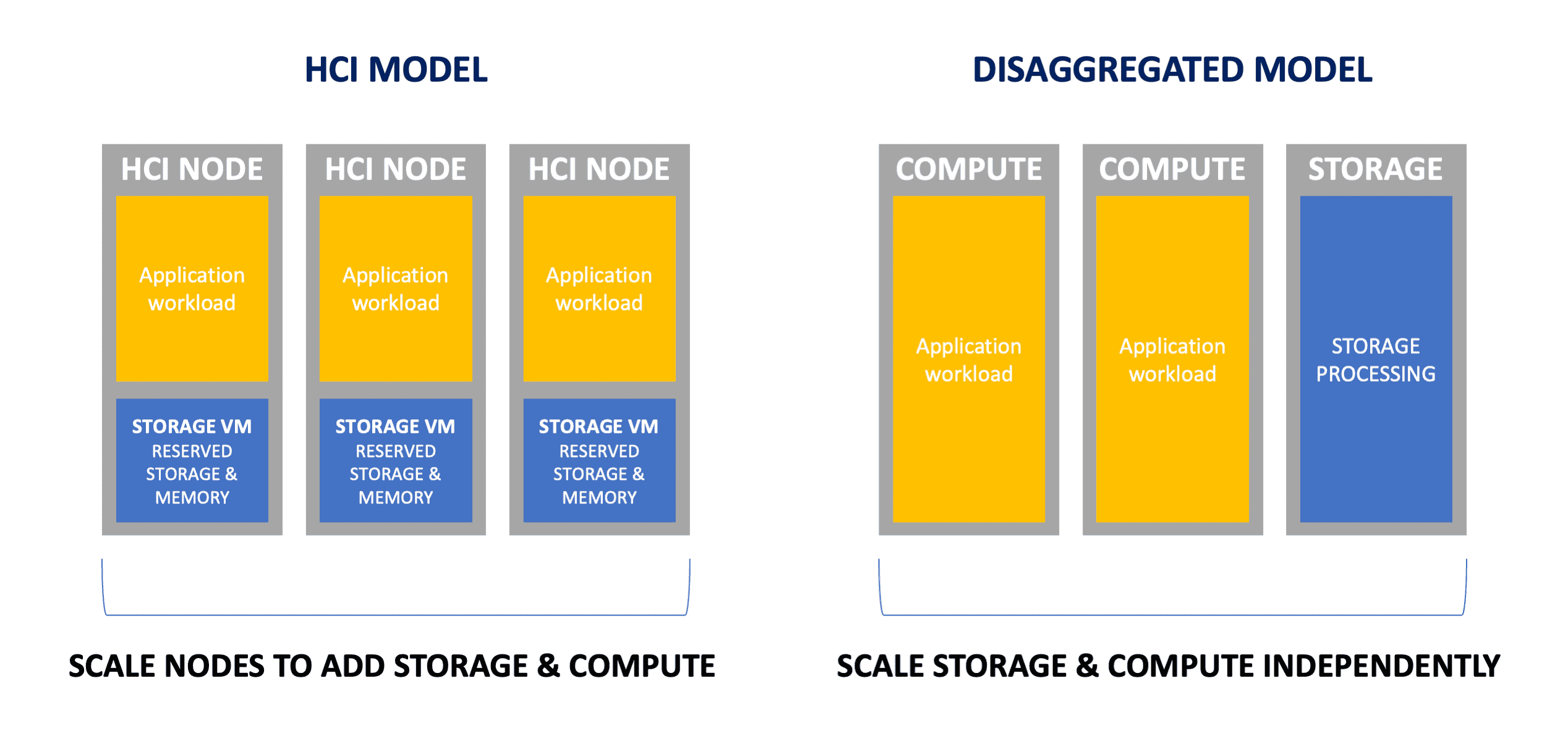

One big challenge in designing edge or remote solutions is whether to implement dedicated or embedded storage. To meet the needs of a simplified support model, many vendors developed consolidated solutions using converged or hyper-converged infrastructure. The CI model simply packages components into a single rack, while HCI runs storage as a subcomponent of the hypervisor or as a virtual machine appliance.

HCI has some interesting advantages that traditional storage and servers couldn’t initially address. In a resilient configuration of (for example) three HCI servers, storage is distributed across all three nodes with built-in redundancy. This design increases availability and improves performance. A comparable server and storage solution could require two or three servers and a dedicated storage appliance. HCI (initially) also had better scaling granularity, either at the disk or server (node) level.

At first inspection, HCI might seem like the obvious solution for edge deployments. However, there’s no free lunch in computing. The processor and memory that would usually be dedicated in a bespoke storage appliance will use a chunk of each server in an HCI configuration. This could result in a requirement to deploy additional servers to meet the computing needs of edge applications.

One argument applied to justify HCI in this kind of configuration is that the storage overhead (whether in a VM or part of the hypervisor) is less than that of a dedicated appliance because cores and memory can be used more efficiently. That supposition is simply not true, as most (if not all) HCI implementations will dedicate resources to ensure consistent storage I/O performance.
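As a rough illustration of that trade-off, the sketch below estimates how many nodes an edge site needs once each HCI node gives up a fixed number of cores to storage. The core counts and the size of the reservation are hypothetical figures chosen for illustration, not measurements from any product.

```python
import math

def nodes_required(app_cores_needed: int, cores_per_node: int,
                   storage_cores_reserved: int) -> int:
    """Nodes needed to supply app_cores_needed when each node
    reserves storage_cores_reserved cores for storage I/O."""
    usable = cores_per_node - storage_cores_reserved
    if usable <= 0:
        raise ValueError("storage reservation leaves no cores for applications")
    return math.ceil(app_cores_needed / usable)

# Hypothetical site: applications need 40 cores, each node has 16 cores.
dedicated = nodes_required(40, 16, 0)  # storage runs on a separate appliance
hci = nodes_required(40, 16, 4)        # assumed 4 cores per node reserved for storage

print(f"servers with dedicated storage: {dedicated}, HCI nodes: {hci}")
```

With these assumed numbers the reservation pushes the site from three servers to four nodes; whether that outweighs the cost of a separate appliance depends on real pricing and workload data.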

Offload

Another aspect of the hardware difference between HCI and dedicated storage is the ability to offload storage-intensive functions. In HCI platforms, the cores used for storage are shared with those for applications. Any use of processing to perform encryption, compression or deduplication is made at the expense of applications. Dedicated storage doesn’t have that challenge, as resources are dedicated, but more importantly, a separate physical platform allows for additional capabilities to be added.

In the FlashSystem 5200, for example, IBM implements compression and encryption on FlashCore modules. A FlashCore module (FCM) differs from a traditional SSD or NVMe drive by providing increased drive capacity – up to 38.4TB raw capacity (per drive) or 87.96TB effective, with compression. FCMs also offer greater endurance than traditional TLC NAND drives, at around two drive writes per day.
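To put those per-drive figures in context, the short calculation below derives the implied compression ratio and scales the numbers to a 12-drive enclosure. It is a back-of-envelope sketch only: it ignores RAID and spare overhead, and it assumes the drive-writes-per-day figure is measured against raw capacity, which the text does not state.

```python
RAW_TB_PER_FCM = 38.4         # raw capacity per drive, from the text
EFFECTIVE_TB_PER_FCM = 87.96  # effective capacity per drive with compression, from the text
DWPD = 2                      # approximate drive writes per day, from the text
DRIVES = 12                   # drive count quoted alongside the power figure in this post

compression_ratio = EFFECTIVE_TB_PER_FCM / RAW_TB_PER_FCM
raw_total_tb = RAW_TB_PER_FCM * DRIVES
effective_total_tb = EFFECTIVE_TB_PER_FCM * DRIVES
# Assumption: DWPD applies to raw capacity.
daily_write_budget_tb = RAW_TB_PER_FCM * DWPD

print(f"implied compression ratio: {compression_ratio:.2f}:1")
print(f"raw capacity, {DRIVES} drives: {raw_total_tb:.1f} TB")
print(f"effective capacity, {DRIVES} drives: {effective_total_tb:.2f} TB")
print(f"write budget per drive per day: {daily_write_budget_tb:.1f} TB")
```

The effective figure depends entirely on how compressible the data is, so the ratio here is better read as a planning assumption than as a guarantee.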

Form Factor

Finally, we should consider the environmental aspects of deploying remote and edge equipment. Most edge environments will not be tier 4 data centres but tier 1 or 2 computer rooms. Space, power, and cooling will be at a premium, so deployed solutions need to be efficient in all these areas. The 5200 (1U) operates at a typical 525W (with 12 drives), compared to the 7200 (2U), which is specified at 2000W. Both systems offer similar features and functionality (see post 1 in our analysis of FlashSystem).

Serviceability is also essential. The FlashSystem 5200 form-factor design provides hot-swappable capabilities for drives, power supplies and compute modules, making maintenance a relatively simple task.

Software

Moving on from hardware, software capabilities play a critical role at the edge. Systems need to offer in-place and remote upgrades, remote support and monitoring, alerting and self-healing capabilities. These features are essential to maintaining a high degree of availability and service uptime. Support and maintenance across a fleet of hundreds or thousands of deployments is a costly business. Therefore, this work is best achieved without site visits and from a centralised location where changes can be rolled out in a controlled manner.

FlashSystem platforms offer a choice of GUI, API or CLI management. This enables customers to integrate systems management within in-house solutions. Alternatively, IBM offers Storage Insights as a SaaS tool to monitor and manage a FlashSystem fleet. The Pro option of Storage Insights adds further alerting and data tiering capabilities.

Whether using dedicated storage or not, efficient fleet management across a widely deployed infrastructure becomes a critical requirement. At small scale, reactive alerting is helpful to detect and resolve problems. At much larger scale, reactive analysis isn’t sufficient, so solutions need to offer proactive information on failures, capacity growth and other potential issues. Storage Insights provides all these features, plus the ability to apply the same monitoring and management process to equipment in the data centre.
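To make the difference between reactive and proactive monitoring concrete, here is a minimal sketch of a capacity forecast: it fits a straight line to daily used-capacity samples and flags sites projected to fill within a warning window. This is not how Storage Insights works internally; the site names, samples, threshold and the `days_until_full` helper are all hypothetical.

```python
from typing import Optional, Sequence

def days_until_full(used_tb: Sequence[float], capacity_tb: float) -> Optional[float]:
    """Least-squares linear fit over daily samples; returns the estimated
    days after the last sample until capacity_tb is reached, or None if
    usage is flat or shrinking."""
    n = len(used_tb)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(used_tb) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(used_tb))
    slope = sxy / sxx  # TB per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    day_full = (capacity_tb - intercept) / slope
    return max(0.0, day_full - (n - 1))

# Hypothetical fleet: (daily used TB samples, usable capacity in TB) per site.
fleet = {
    "site-a": ([40.0, 41.0, 42.0, 43.0, 44.0], 50.0),
    "site-b": ([10.0, 10.0, 10.0, 10.0, 10.0], 50.0),
}
WARN_DAYS = 30  # assumed warning window

at_risk = []
for site, (samples, capacity) in fleet.items():
    remaining = days_until_full(samples, capacity)
    if remaining is not None and remaining <= WARN_DAYS:
        at_risk.append(site)

print("sites projected to fill within", WARN_DAYS, "days:", at_risk)
```

A reactive alert would only fire for site-a once a usage threshold is crossed; the forecast raises it while there is still time to schedule an upgrade remotely.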

One final point to consider. Some degree of data mobility is needed between core and edge systems. Application code will require distribution. Data needs to flow in both directions. When systems use the same consistent replication technology across edge and core, data and code distribution is simplified and more efficient.

Lifecycle

Let’s step back and look at the lifecycle of edge deployments. Typical infrastructure management goes through the following steps:

- Design

- Test and Deploy

- Operate

- Maintain

In our discussion so far, we’ve highlighted the challenges of good design, deployment, and operations. Once systems are in place and supported, we must consider capacity management for application growth, additional performance demands and the growth of storage capacity.

In HCI solutions, capacity management for applications and storage is in lockstep, as storage is deployed on each node. New capacity is brought into the system by adding servers or replacing nodes. In a server/storage solution, the two components scale independently. Storage capacity and performance may be increased without impacting servers or causing an outage. Similarly, application servers may be changed without affecting the storage component.

There is no right or wrong answer in deciding how systems should scale, other than to consider what each option offers. Node scaling for capacity, for example, may result in the purchase of unnecessary hardware. Alternatively, simply swapping drives in a storage solution that already has the horsepower could be the more efficient choice.
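The sketch below works through one such comparison for a storage-heavy site. All requirements and per-node sizes are hypothetical; the point is only that when compute and storage scale together, the node count is set by whichever resource runs out first.

```python
import math

def hci_nodes_needed(cores_needed: int, tb_needed: float,
                     cores_per_node: int, tb_per_node: float) -> int:
    """In HCI, compute and storage scale together, so the node count is
    driven by whichever resource is exhausted first."""
    by_compute = math.ceil(cores_needed / cores_per_node)
    by_storage = math.ceil(tb_needed / tb_per_node)
    return max(by_compute, by_storage)

# Hypothetical site: modest compute demand, storage-heavy workload.
CORES_NEEDED, TB_NEEDED = 32, 200
CORES_PER_NODE, TB_PER_NODE = 16, 40

hci = hci_nodes_needed(CORES_NEEDED, TB_NEEDED, CORES_PER_NODE, TB_PER_NODE)
servers_only = math.ceil(CORES_NEEDED / CORES_PER_NODE)  # storage sits on a separate array
surplus_cores = hci * CORES_PER_NODE - CORES_NEEDED

print(f"HCI nodes: {hci}")
print(f"servers alongside dedicated storage: {servers_only}")
print(f"surplus cores in the HCI design: {surplus_cores}")
```

With a compute-heavy workload the result flips, which is why neither model is right in every case.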

The Architect’s View™

Converged and hyper-converged infrastructure have been offered as alternative designs for edge computing, where dedicated server and storage components are seen as an expensive overhead. However, over the last decade, storage solutions have standardised and become remarkably efficient, driven in part by the adoption of NAND flash and NVMe. Ironically, some vendors have introduced the dHCI or disaggregated HCI concept as a way of overcoming the storage/compute lock-in that HCI introduces. We’ve come full circle, back to the model of independently scaling storage and compute.

The ability to deploy dedicated storage at the edge only works if the platform is efficient, can be managed remotely, and has lightweight physical serviceability requirements. FlashSystem 5200 meets all these needs. We’re interested to see how businesses will use the FlashSystem 5200 to build edge and non-core solutions for a dispersed enterprise. We see this platform as a strategic opportunity for IBM to gain a leadership foothold in the growing edge market.

Copyright (c) 2007-2021 – Post #3e31 – Brookend Ltd, first published on https://www.architecting.it/blog, do not reproduce without permission. This work has been produced with sponsorship from IBM.